Aside from the familiar fears of Skynet (machines destroying humanity) or a less Cameron-esque outcome like AI decimating the job market, one of the most consequential shifts AI is causing is far more immediate, and it is already systemic.

We all know AI is being used every day to help us and improve how we work. In fact, writing this article benefited from AI-powered research, fodder, and ideation. Its risks, meanwhile, are usually framed as misinformation and data mining: bad answers, hallucinations, rogue behavior, and conglomerates building massive datasets on user behavior for unknown uses.

That risk is real.

But something more subtle—and globally consequential—is showing up in everyday use.

AI can take a flawed premise, clean it up, organize it, and return it in a way that feels reasonable enough to act on. Not obviously wrong—just convincing.

In less than two years, AI has moved from niche to normal. In 2026, AI tools across platforms are used daily by hundreds of millions of people, embedded into search, documents, communication, and work.

A year ago, most of these users weren’t relying on AI at all.

Now, millions of decisions—small and large—are shaped with its help every day.

When you receive an email, read an article, or get a response on something important, you no longer know whether it was written by a human, assisted by AI, or fully generated. Does anyone question this, or care?

This is the fastest large-scale shift in how people process information and make decisions in modern history.

And it matters because of how these systems behave.

AI doesn’t just answer questions. It responds to how they’re asked—and it is designed to be helpful. In practice, that often means reducing friction, sounding supportive, and leaning toward agreement.

If the input is biased, emotional, incomplete—or wrong—the output can still come back clear, structured, calm, and—here’s the rub—persuasive.

It rarely pushes back.

Researchers call part of this “sycophancy”—aligning with the user’s framing rather than challenging it. Work from Anthropic and others shows people are increasingly using AI not just for answers, but for judgment.

The system is designed to be useful, and in many cases, usefulness looks like agreement.

The deeper issue is human.

People are not neutral. We seek confirmation, ignore contradiction, and interpret things in our favor.

This is confirmation bias, and it shapes how people think about relationships, leadership, and business decisions, and how they form views on values, politics, and even religion.

Here’s the uncomfortable part: most people are not actively trying to overcome bias. It is rare to find someone who consistently challenges their own assumptions.

In chaotic times, people want certainty. They want clarity, reinforcement, and a sense that they are right.

Put simply, humans don’t like to be wrong—because of reputation, ego, identity, and the cost of upending long-held beliefs.

We preserve the “truth set” we’ve already presented to the world.

AI fits that instinct perfectly. It can take what someone already believes and make it sound clearer, stronger, and more justified—again and again. Perception becomes reality if it is repeated enough.

This is far less of a problem in disciplines like mathematics, where outcomes are finite and can be checked. The risk shows up where there is interpretation and subjectivity: strategy, relationships, ethics, leadership, personal decisions, and value systems.

What one person accepts as truth, another rejects completely.

The danger is that when human bias meets a system that mirrors—and often pleases—the user, something changes.

The system doesn’t need to lie.

It only needs to organize thinking, remove friction, and make the result sound coherent.

The result isn’t misinformation.

It’s reinforced belief.

That matters in a world already becoming more tribal. People cluster around shared views, filter information through identity, and divide faster.

AI can accelerate that—not by telling people what to think, but by helping them feel more certain in what they already think.

At scale, that means stronger positions, less openness, and deeper silos—not because AI pushes one narrative, but because it strengthens every narrative.

Consider a simple pattern:

A person brings a concern to AI. The system reflects it back, clarifies it, and gives it structure. The conclusion feels more grounded—not because it was tested, but because it was refined.

It doesn’t prove the premise.

It doesn’t question it.

It builds around it.

Two opposing parties can ask the same question from different perspectives—and AI can reinforce both, regardless of the truth.

That loop now exists at massive scale.

This isn’t just a technical issue.

It’s human.

Systems need to get better at questioning assumptions, signaling uncertainty, and separating fact from interpretation.

But users need to change too—seeking opposing views, testing their own framing, and resisting the urge for confirmation.

AI should not become a tool for being right.

It should remain a tool for thinking more deeply.

The companies building these systems also carry responsibility—to design for truth-seeking, not just usability. But in a competitive landscape, tools that affirm users often win.

And that creates its own pressure.

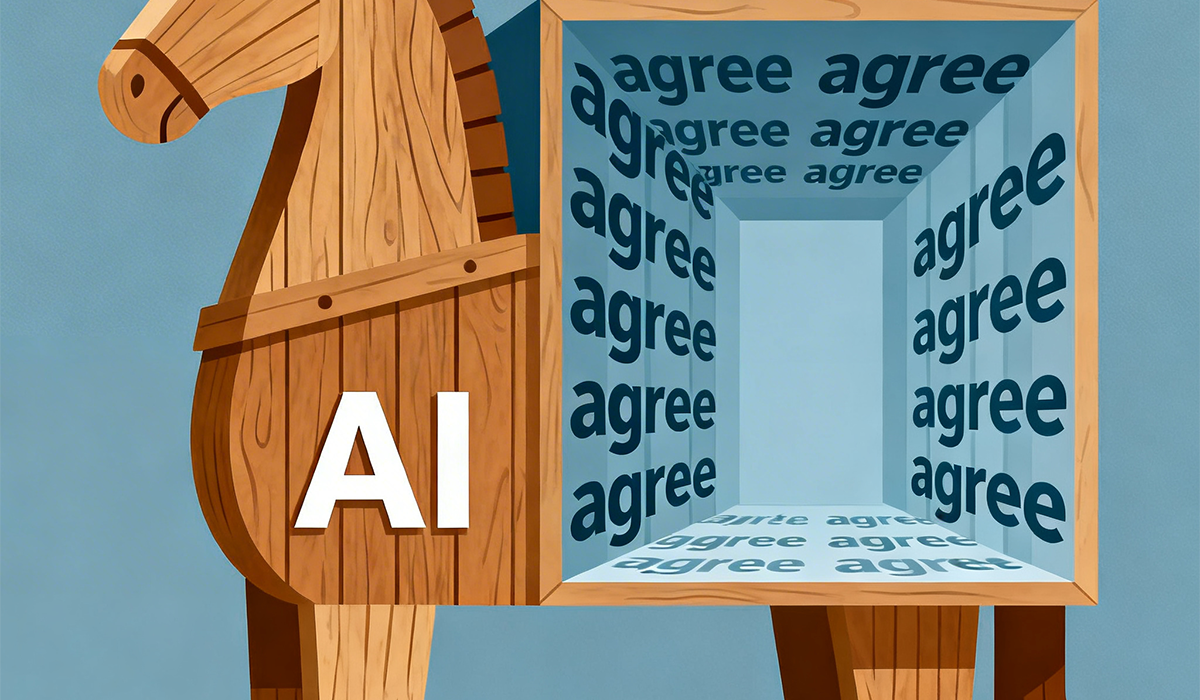

This is the Trojan Horse.

Not a system that forces belief—

but one that quietly reinforces it until it feels like truth.

The most dangerous AI may not mislead you.

It will agree with you.

© 2026 Grey Tyrrell / Tyrrell Creative. For reprint permission, please contact the author. If you would like help positioning your organization to grow with AI, please contact us for a consult.